Opened at 2010-05-18T01:14:15Z

Closed at 2010-10-31T03:01:41Z

#1045 closed defect (fixed)

Memory leak during massive file upload (or download) through SFTP frontend

| Reported by: | francois | Owned by: | zooko |

|---|---|---|---|

| Priority: | critical | Milestone: | 1.8.1 |

| Component: | code | Version: | 1.6.1 |

| Keywords: | performance reliability upload download memory sftp unfinished-business | Cc: | francois@…, zooko |

| Launchpad Bug: |

Description (last modified by davidsarah)

Today, I copied about 12'000 files for a total size of about 52 GB into Tahoe with the SFTP frontend.

Here's what top has to say about the Tahoe process after this operation.

2765 francois 20 0 2059m 1.5g 2472 D 2 75.4 527:08.83 python

I will update this ticket as soon as I can gather more details.

David-Sarah Hopwood proposed to do the same test a second time via the wapi to help locate the leak.

Here is what Brian Warner proposed on IRC:

keep track of the process size vs time, with munin or a script that saves values and then graph them with gnuplot or something

I think tahoe's /stats WAPI will give you process-memory-size info

the idea is to do some operation repeatedly, measure process-space change while that's running, then switch to some other operation and measure that slope, and look for differences

'cp' to an SFTP-mounted FUSEish thing, vs 'tahoe cp' might be a good comparison

Attachments (9)

Change History (60)

comment:1 Changed at 2010-05-18T01:24:48Z by davidsarah

- Component changed from unknown to code

- Description modified (diff)

- Keywords memory sftp added

- Milestone changed from 1.8.0 to undecided

- Owner changed from nobody to somebody

comment:2 Changed at 2010-05-18T01:26:05Z by davidsarah

- Description modified (diff)

comment:3 Changed at 2010-05-18T02:19:31Z by zooko

comment:4 Changed at 2010-05-18T15:32:12Z by zooko

- Priority changed from major to critical

This is serious! If it happens when not using SFTP then it is a critical regression in Tahoe-LAFS. If it happens when using SFTP then it is a critical problem in the new SFTP support. :-/

comment:5 Changed at 2010-05-18T15:42:44Z by francois

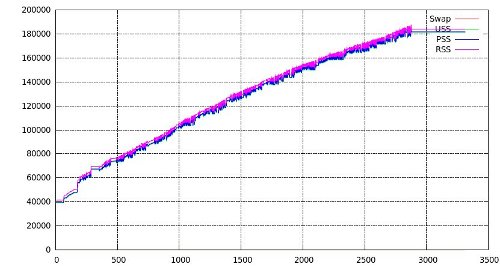

In both graphs, x axis is in 10 seconds increments and y is in bytes.

The first graph was generated by logging memory usage, as reported by smem during the upload of 53 GB of files with tahoe cp. Please note that the increase in memory usage (at x==4000) was due to the opening of a single file with SFTP during this process.

The second graph was generated by logggin memory usage during the upload of the same files through the SFTP frontend. The upload was stopped before it finished.

The conclusion I take from these graphs, is that the leak probably lies in the SFTP frontend.

Changed at 2010-05-18T15:47:36Z by francois

Changed at 2010-05-18T15:47:45Z by francois

comment:6 Changed at 2010-05-18T15:52:34Z by francois

- Summary changed from Memory leak during massive file upload to Memory leak during massive file upload through SFTP frontend

For the record, those were the scripts used to generated both graphs.

$ smem-log.sh 1 [t]ahoe-client.tac | tee /tmp/tahoe-sftp-smem.log

The gnuplot script.

set xlabel "seconds"

set ylabel "bytes"

plot "tahoe-sftp-smem.log" using 0:6 with lines title 'Swap', \

"tahoe-sftp-smem.log" using 0:7 with lines title 'USS', \

"tahoe-sftp-smem.log" using 0:8 with lines title 'PSS', \

"tahoe-sftp-smem.log" using 0:9 with lines title 'RSS'

smem-log.sh

#!/bin/sh

while :; do

SMEM=$(smem -P $2 -H)

if [ $? -eq 0 ]; then

echo "\"$(date)\" $SMEM"

fi

sleep $1

done

comment:7 follow-up: ↓ 10 Changed at 2010-05-18T19:47:53Z by zooko

François: what is the script used to initiate the 12,000 file uploads? There should be two scripts--one using tahoe cp and one using SFTP, right? I want to see how many files are being uploaded in parallel vs. in serial.

comment:8 follow-up: ↓ 9 Changed at 2010-05-18T19:50:04Z by zooko

"memory usage, as reported by smem" -- more specifically what does smem report here? resident/virtual-size/ ?

comment:9 in reply to: ↑ 8 Changed at 2010-05-18T19:51:49Z by zooko

Replying to zooko:

"memory usage, as reported by smem" -- more specifically what does smem report here? resident/virtual-size/ ?

Well fdz posted the script:

SMEM=$(smem -P $2 -H)

Oh, and I see the graph says "swap/PSS/USS/RSS".

comment:10 in reply to: ↑ 7 Changed at 2010-05-18T22:00:50Z by francois

Replying to zooko:

François: what is the script used to initiate the 12,000 file uploads? There should be two scripts--one using tahoe cp and one using SFTP, right? I want to see how many files are being uploaded in parallel vs. in serial.

The files were uploaded sequentially.

And, my bad, the values on the y axis are in kbytes and not bytes as mentionned in 5.

comment:11 follow-up: ↓ 12 Changed at 2010-05-19T01:42:06Z by davidsarah

It turns out that the SFTP code was logging all of the data going through it to the foolscap log. I've fixed that in the branch, so please pull and try again (if only to eliminate this as a possible cause of the leak).

Changed at 2010-05-19T11:20:20Z by francois

comment:12 in reply to: ↑ 11 Changed at 2010-05-19T11:21:56Z by francois

Replying to davidsarah:

It turns out that the SFTP code was logging all of the data going through it to the foolscap log. I've fixed that in the branch, so please pull and try again (if only to eliminate this as a possible cause of the leak).

Same test with latest code. The memory leak is probably somewhere else.

comment:13 Changed at 2010-05-19T12:59:39Z by zooko

One way to investigate this would be to take one of the SFTP unit tests and wrap it in a call to pyutil.memutil.check_for_obj_leakage() and make that be a new unit test.

The docstring of check_for_obj_leakage() explains how to (carefully) interpret its results.

comment:14 Changed at 2010-05-19T19:45:55Z by francois

- Summary changed from Memory leak during massive file upload through SFTP frontend to Memory leak during massive file upload (or download) through SFTP frontend

Further testing has shown that the leak can also be reproduced by downloading files from Tahoe (i.e. copying them from the SFTP server to the local system).

Changed at 2010-05-26T04:05:30Z by davidsarah

Graph of objects *possibly* leaked by an SFTP upload, and their backreferences

comment:15 Changed at 2010-05-27T16:41:49Z by davidsarah

Hmm, how do we know this is not the same bug as #891 ?

The difference between the behaviour of SFTP and tahoe cp doesn't necessarily imply that it isn't, because the SFTP implementation might be allowing more concurrency. François, are you absolutely sure that each SFTP upload (that is, the upload to storage servers, not just the gateway) is completing before starting the next?

comment:16 Changed at 2010-06-08T23:34:51Z by francois

OK, I've been running each SFTP unit test many times in a row to see how increase in memory usage was influenced by each test.

term1 $ trial -u allmydata.test.test_sftp.Handler.test_basic term2 $ while :; do smem -P test_basi[c] -H; sleep 10; done

Here are the results.

- All SFTP tests -> Leak

- test_basic -> No leak

- test_convert_error -> No leak

- test_execCommand_and_openShell -> No leak

- test_extendedRequest -> No leak

- test_makeDirectory -> No leak

- test_not_implemented-> No leak

- test_openDirectory_and_attrs -> No leak

- test_openFile_read -> Perhaps a very small leak (4.5 MB increase after ~30 iterations)

- test_openFile_write -> Huge LEAK (420 MB increase after 110 iterations)

- test_removeDirectory -> Small leak (about 3 MB increase after 100 iterations)

- test_removeFile -> Small leak (about 2.1 MB increase after 100 iterations)

- test_renameFile -> Small leak (5 MB increase after 100 iterations)

- test_renameFile_posix -> Small leak (4.5 MB increase after 100 iterations)

So, the main culprit is test_openFile_write!

comment:17 Changed at 2010-06-08T23:53:56Z by francois

Additional testing has shown that the major memory leak comes from the last part on test_openFile_write which begins in test_sftp.py line 887.

comment:18 Changed at 2010-06-09T09:40:30Z by francois

Yay! David-Sarah found the cause of the most significant leak in the SFTP frontend and fixed it in 3c44389440097dff.

There are still some minor leaks (two order of magnitude smaller) as mentioned in 16 but we can probably bear with them for 1.7.0-beta.

comment:19 Changed at 2010-06-09T09:40:57Z by francois

- Cc francois@… added

comment:20 Changed at 2010-06-09T14:16:21Z by zooko

I reviewed 3c44389440097dff and saw no problems.

comment:21 Changed at 2010-06-09T14:28:42Z by zooko

I guess we're leaving this ticket open to investigate the smaller ones after 1.7.

comment:22 follow-up: ↓ 23 Changed at 2010-06-11T19:23:50Z by davidsarah

- Keywords download added

- Owner changed from somebody to francois

François: can you reproduce the graph in comment:12 with current trunk?

Changed at 2010-06-12T12:59:14Z by francois

comment:23 in reply to: ↑ 22 Changed at 2010-06-12T13:08:00Z by francois

Replying to davidsarah:

François: can you reproduce the graph in comment:12 with current trunk?

Here is the result of the test with 1.6.1-r4477. The graph shows that the actual memory leak is not as small as I thought in comment:18. Perhaps functions that are still leaking memory are being called much more often than openFile_write in a typical SFTP session.

comment:24 Changed at 2010-07-17T06:08:12Z by davidsarah

- Milestone changed from undecided to 1.8.0

- Owner changed from francois to davidsarah

- Status changed from new to assigned

comment:25 Changed at 2010-07-20T04:59:14Z by zooko

- Keywords unfinished-business added

comment:26 Changed at 2010-07-24T05:34:20Z by zooko

This is a serious bug. It is correctly marked "critical" in Priority. What's the next step? I think it is either to use a tool like check_for_obj_leakage in a controlled deterministic unit-test style exercise of the SFTP front-end code, or else for someone to experiment with a live system and try to figure out what sorts of usage patterns trigger the memory consumption.

Any other ideas?

comment:27 Changed at 2010-08-09T21:23:42Z by zooko

- Cc zooko added

- Milestone changed from 1.8.0 to soon

comment:28 Changed at 2010-08-09T22:16:10Z by zooko

- Milestone changed from soon to 1.9.0

comment:29 follow-up: ↓ 33 Changed at 2010-10-22T13:11:12Z by zooko

In http://tahoe-lafs.org/pipermail/tahoe-dev/2010-October/005464.html François says that he also gets a memory leak during tahoe deep-check --add-lease --repair in addition to getting a memory leak while using the sftp frontend. Could there be two leaks? Or is it likely to be the same one in these two cases?

comment:30 Changed at 2010-10-22T16:02:41Z by warner

I think his email describes the leak here in #1045, but not necessarily in deep-check. The distinguisher will be if there are lots of MutableFileNodes hanging around. The #1045 leak appears to be related to making lots of changes to a single mutable file, whereas deep-check is going to be reading from lots and lots of files (probably mostly immutable, but possibly some mutable, depending upon how you use directories).

comment:31 follow-up: ↓ 32 Changed at 2010-10-22T16:06:46Z by warner

Oh, Francois, could you mention whether these uploads are all going to the same directory, or if they're going to a variety of directories? I think the "massive" qualifier in the title might best apply to the number of changes being made to a single directory, rather than the total size of the data being uploaded.

Looking at the difference between A) upload 10000 files of 1MB each, versus B) upload 10 files of 1GB each, would help distinguish between problems with large files and problems with large/churning directories. And uploading then all into one directory versus spread across 1000 directories would help distinguish between mutable-file-modification in general, and lots-of-generations on a single mutable file.

comment:32 in reply to: ↑ 31 ; follow-up: ↓ 34 Changed at 2010-10-23T00:03:12Z by davidsarah

Replying to warner:

... And uploading then all into one directory versus spread across 1000 directories would help distinguish between mutable-file-modification in general, and lots-of-generations on a single mutable file.

Please make this:

- upload all into the root directory (of the SFTP user);

- upload all into one subdirectory;

- upload spread across 1000 subdirectories.

This will test whether it is significant that a reference to the root dirnode is held for as long as the SFTP user session is logged in.

Changed at 2010-10-23T01:27:27Z by davidsarah

mutable/common.py: make the ResponseCache? only cache shares with the last-seen seqnum for a given storage index. No unit tests; do not apply to trunk yet.

comment:33 in reply to: ↑ 29 ; follow-up: ↓ 37 Changed at 2010-10-24T13:57:05Z by francois

Replying to zooko:

In http://tahoe-lafs.org/pipermail/tahoe-dev/2010-October/005464.html François says that he also gets a memory leak during tahoe deep-check --add-lease --repair in addition to getting a memory leak while using the sftp frontend. Could there be two leaks? Or is it likely to be the same one in these two cases?

To avoid mixing things up, I have created another ticket about this deep-check leak: #1229.

comment:34 in reply to: ↑ 32 ; follow-up: ↓ 35 Changed at 2010-10-24T14:41:11Z by francois

Replying to davidsarah:

This will test whether it is significant that a reference to the root dirnode is held for as long as the SFTP user session is logged in.

This is implemented in this commit. Unfortunately, it doesn't look sufficient to have ResponseCache correctly gc'ed.

comment:35 in reply to: ↑ 34 Changed at 2010-10-24T16:47:45Z by davidsarah

Replying to francois:

Replying to davidsarah:

This will test whether it is significant that a reference to the root dirnode is held for as long as the SFTP user session is logged in.

This is implemented in this commit. Unfortunately, it doesn't look sufficient to have ResponseCache correctly gc'ed.

This change looks correct, but I'd rather not make it if it doesn't solve the underlying problem.

comment:36 Changed at 2010-10-24T17:17:59Z by davidsarah

Discussion of whether the ResponseCache is needed: http://tahoe-lafs.org/pipermail/tahoe-dev/2010-October/005488.html

comment:37 in reply to: ↑ 33 ; follow-up: ↓ 39 Changed at 2010-10-24T20:07:13Z by davidsarah

- Keywords review-needed added

- Owner changed from davidsarah to somebody

- Status changed from assigned to new

Replying to francois:

Replying to zooko:

In http://tahoe-lafs.org/pipermail/tahoe-dev/2010-October/005464.html François says that he also gets a memory leak during tahoe deep-check --add-lease --repair in addition to getting a memory leak while using the sftp frontend.

The following test confirms that the size of the ResponseCache (not the number of entries, interestingly) increases every time we download a mutable file:

def test_response_cache_memory_leak(self):

d = self.nodemaker.create_mutable_file("contents")

def _created(n):

d = n.download_best_version()

d.addCallback(lambda res: self.failUnlessEqual(res, "contents"))

d.addCallback(lambda ign: self.failUnless(isinstance(n._cache, ResponseCache)))

def _check_cache_size(expected):

# The total size of cache entries should not increase on the second download.

d2 = n.download_best_version()

d2.addCallback(lambda ign: self.failUnlessEqual(len(repr(n._cache.cache)), expected))

return d2

d.addCallback(lambda ign: _check_cache_size(len(repr(n._cache.cache))))

return d

d.addCallback(_created)

return d

test_response_cache_memory_leak.todo = "This isn't fixed (see #1045)."

Could there be two leaks? Or is it likely to be the same one in these two cases?

I think we have no evidence for two independent leaks at this point (but we might need to fix the ResponseCache one in order to see whether there is anything else).

review-needed for the above test.

comment:38 Changed at 2010-10-24T20:50:20Z by zooko

pyutil's memutil.check_for_obj_leakage() might be useful, and so might memutil.measure_obj_leakage().

comment:39 in reply to: ↑ 37 Changed at 2010-10-26T00:21:53Z by francois

- Keywords review-needed removed

Replying to davidsarah:

The following test confirms that the size of the ResponseCache (not the number of entries, interestingly) increases every time we download a mutable file:

Well, the number of entries actually increases in the second dimension of the _cache array. The cache looks like that in a simplified way: _cache[verinfo][offset].

review-needed for the above test.

I reviewed your test and it looks good. I also actually tested it, when the cache is activated it fails and when the cache is deactivated (by commenting trunk/src/allmydata/mutable/servermap.py#L584) it passes.

comment:40 Changed at 2010-10-26T01:31:34Z by david-sarah@…

In 59e80b2ec92928bf:

Changed at 2010-10-27T05:00:30Z by davidsarah

make ResponseCache? smarter to avoid memory leaks: don't record timestamps, use DataSpans? to merge entries, and clear the cache when we see a new seqnum. refs #1045, #1229. (This supercedes only-cache-last-seen-seqnum.darcs.patch.)

comment:41 Changed at 2010-10-27T05:14:07Z by davidsarah

- Keywords review-needed added

- Milestone changed from 1.9.0 to 1.8.1

- Owner changed from somebody to warner

comment:42 Changed at 2010-10-29T08:23:04Z by warner

- Keywords review-needed removed

- Owner changed from warner to davidsarah

new-responsecache.darcs.patch looks great! r+ from me.

comment:43 Changed at 2010-10-29T19:43:13Z by david-sarah@…

In 4061258c85da2960:

(The changeset message doesn't reference this ticket)

comment:44 Changed at 2010-10-30T05:01:48Z by davidsarah

- Keywords news-needed added

- Owner changed from davidsarah to francois

francois: please repeat the graph of comment:12 and comment:23 with current trunk.

Changed at 2010-10-30T10:21:59Z by francois

comment:45 Changed at 2010-10-30T10:33:26Z by francois

This is much better now!

Thank you very much Brian and David-Sarah for your great work.

This following graph was produced during the upload of about 3.5 GB of audio files (between 1 MB and 10 MB in size) through the SFTP frontend. In this particular workload, a (small) leak of about 4 MB per hour is still visible.

I'd say that we can consider this issue closed but I'll continue to investigate this kind of issue and will open further tickets as necessary.

Changed at 2010-10-30T10:38:37Z by francois

comment:46 Changed at 2010-10-30T10:40:34Z by francois

- Keywords news-needed removed

- Owner changed from francois to davidsarah

Please find attached a ready-to-merge patch to the NEWS file.

comment:47 Changed at 2010-10-30T15:13:47Z by zooko

- Owner changed from davidsarah to zooko

- Status changed from new to assigned

comment:48 Changed at 2010-10-30T15:14:10Z by zooko

- Status changed from assigned to new

comment:49 Changed at 2010-10-30T15:14:19Z by zooko

- Status changed from new to assigned

comment:50 Changed at 2010-10-31T03:00:53Z by davidsarah

NEWS entry in 679c090c82cede16. We'll open a new ticket for the smaller leak.

comment:51 Changed at 2010-10-31T03:01:41Z by davidsarah

- Resolution set to fixed

- Status changed from assigned to closed

Also try the handy new tool "smem":

http://www.selenic.com/smem/

That process that you are reporting on is the gateway process, right? (I.e., the SFTP server and the Tahoe-LAFS storage client.)